Systematic killing has long been associated with some of the darkest episodes in human history, including administrative colonial violence, ethnic cleansing, and genocide. Increasingly, however, it is framed as a desirable outcome in war, particularly in the context of military AI and lethal autonomy. Autonomous weapons systems, defenders argue, will surpass humans not only militarily but morally, enabling a more precise and dispassionate mode of violence, free of the emotion and uncertainty that too often weaken compliance with the rules and standards of war.

We contest this framing. Drawing on the history of systematic killing, we argue that lethal autonomous weapons systems reproduce, and in some cases intensify, the moral challenges of the past. Autonomous violence incentivises a moral devaluation of those targeted and erodes the moral agency of those who kill. Both outcomes imperil essential restraints on the use of military force.

Read full transcript (generated by Whisper)

Elke Schwarz with a talk called Crimes of Dispassion. Let me introduce her briefly. Elke Schwarz is a professor of political theory at Queen Mary University of London. Her research focuses on the intersection of the ethics of war and the ethics of technology, with an emphasis on unmanned and autonomous/intelligent military technologies and their impact on the politics of contemporary warfare. Her books include Death Machines: The Ethics of Violent Technologies. She's an RSA fellow, a member of the International Committee for Robot Arms Control, a fellow at different research institutions, and also an associate of the Imperial War Museum. I'm very happy to welcome you, Elke. And you have the floor. You're able to share a presentation.

Yes. Wonderful. So as you have already highlighted, Tito, my work is concerned with ethics in this new algorithmic war context. And I think it's important, of course, that we understand the systems and all the materials that they're made up of, and, of course, the technological logic that is at work. But sometimes I think we lose sight of what these systems affect, and of the humans that are always embedded within those kinds of technological logics: the humans either as targets, as the mannequins in these strange installations, or humans as operators.

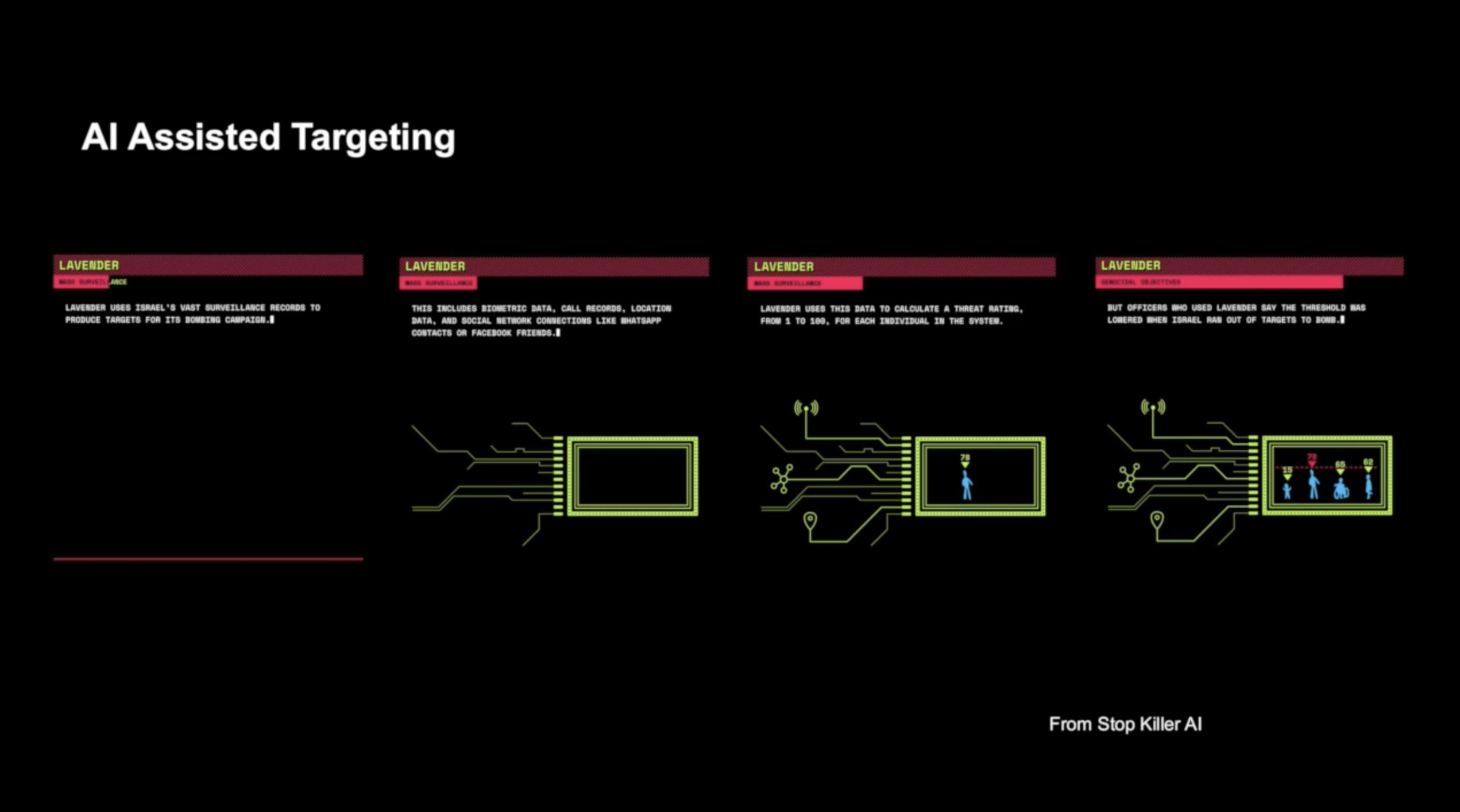

They are very often made invisible. And it all becomes very, very disembodied. And so this paper that I'm presenting today, which I've co-authored with my friend and colleague Neil Renic, wants to refocus a little bit on this human-machine constellation, and specifically on what happens to the human and what that then affects in terms of violence. The paper itself comes out of a conversation that we had at a conference five years ago or so. And we spoke about a kind of latent discomfort with the way that AI, especially autonomous systems, autonomous violence, literally systematizes the application of violence, or how it produces just a killing by system. If we look at the types of systems, and I'm going to say the word systems a lot today, in which AI plays a significant role, either for identifying what or who is marked for death or also for actioning a decision, the interfaces increasingly resemble a type of workflow management system. As Francis already said earlier, a database kind of warfare for efficient killing or efficient kills.

To me, it seems like a supply chain management logic, which allows for action sequences to become more fully automated, if not autonomous, and which focuses very much on the processes. And so this has occupied me for a long time now, what I like to call death by dashboard. And I will say a little bit more about that at the end. But it really struck us that when you use systems for killing, and with it a necessarily systems-oriented approach, there are some resonances with systematic killing as we've seen it through history. And we thought it would be valuable to draw on the history of systematic violence to better clarify what kind of discomfort we have with that. In the paper, we look at the various characteristics and logics of human-machine interaction in the context of AI-enabled lethal autonomous weapon systems and their specific computational modes of killing. Now, we focus on autonomous weapon systems, but I would argue that even with AI decision support systems in the mix, the kill chain or the notion of killing becomes increasingly autonomous. And that's a very important aspect of AI.

[unintelligible] ... who are involved in administering the violence. And this in turn jeopardizes important restraints on the use of force in military contexts. So the paper really aims to flesh out the notion that killing through or with autonomous weapon systems implies a dehumanizing objectification of those that are the targets, of those in the crosshairs of the system, which poses a problem for traditional understandings in the ethics of or in war.

But we take this a step further, and we draw on the help of the psychologist Herbert C. Kelman. We discuss how, for those humans involved in the administration of such violence, the moral inhibitions towards the use of lethal force, towards inflicting death, are lessened. And ultimately, the paper and this kind of work is a pitch against those arguments that suggest that the use of AI-enabled decision support systems and autonomous weapon systems might indeed be morally superior to what is often named as the flawed brutality of humans. So it's a strange pitching of the human against the machine, which I think is an erroneous approach. But we want to push with this paper a little bit against those arguments. We argue that where moral status is weakened and moral restraint is undermined or eroded, the possibility of unwarranted, unjust and very brutal applications of violence is always greater. Now, we wrote this before the war in Gaza broke out and before the reports from +972 Magazine became public. And in an extremely tragic sense, we feel that [unintelligible].

And it's a very important issue. And we can point towards some empirical evidence for the arguments that we sought to make in this paper. Systematic killing, as a signifier, as a term, is, of course, associated with the darkest periods of human violence in history. And so to get our heads around the logic of systematic killing, human killing as well as machine killing, we engage this history of systematic killing. And we consider how modes of violence influence and distort human relations and ethical considerations, both within and outside of a battle context or the battlefield. Now, systematization either directly imposes or, at the very least, incentivizes the adoption of categories and classification and prefixed targeting boxes, if you will. Those on the receiving end of violence are de-individualized. Differences, including those differences that might shape or inform our moral judgment as to whether the targeting is fair or unfair, just or unjust.

Those are eroded in that process. Now, the relationship between objectification, the denial of agency and subjectivity, and violence is well studied. It is well documented. There are a number of really great texts on this, and I'll come back to that in a little bit. Systematization typically erodes the moral status of those who are targeted. We see that in many historical cases of atrocities and systematic mass violence. Active perpetrators, as well as passive bystanders, are also often disempowered, or they disempower themselves, as moral agents. And that is the second dimension here that is crucial. They lose or they give up the capacity to self-reflect, to exercise meaningful moral judgment, not just an approval of a decision. And taking a cue from these historical lessons, we thought, is important for our context today. So with this in mind, in the paper itself, and it's open access so you can read it, we look at episodes in history, colonial history, World War Two, of course, the Vietnam War, and the various ways in which targets have been rendered as objects, objectified and dehumanized. With objectification and dehumanization, the door to more violence is almost always opened.

The more systemic or systematic the structures that facilitate the killing, the more the targets were classified, not according to their humanity, but according to some pre-specified set of parameters that were cast in terms of a danger, a risk, a threat, enmity. And the more that happens, the greater the possibility for dispassionately applied violence. So there is the other dimension that we've wanted to focus on a little bit more, this moral dimension that relates to the dispensing of violence by those who work within a systematic setting. Psychoanalysis suggests that for humans to engage in acts that do not fully accord with their own moral standards, a kind of splitting off of cognition and affect has to take place in order for that act to be done or to happen. So in other words, separating rational thought from the emotional states, or indeed outsourcing the cognition to keep a check on the emotion, needs to happen for violence to be inflicted, if you will, especially mass violence. So for analysts of violence in World War I and World War II, that constitutes a psychopathology of modernity, the splitting off of emotional states, and it has some part to play, a significant part to play, in facilitating the erosion of moral restraint that we were able to witness in past conflicts and wars.

To take a slightly closer look at the erosion of moral restraint, we turn to the work of, I mentioned him already, Herbert C. Kelman and his [title unintelligible] work on target objectification and the erosion of moral restraint. And he recognizes some really interesting things, we thought. First of all, he says, historically rooted and situationally induced hostility happens most often along racialized lines. That forms a substantive element in systematic mass killings. But he argues that's not the primary instigator for large-scale violence. Some other modes have to be at work there. So he advises us to consider, it's a quick quote, the conditions under which the usual moral inhibitions against violence become weakened. What are those factors? And in his paper, which he wrote in 1973 on mass violence, he identifies three crucial aspects. One is authorization. The second one is routinization. And the third one is the category that we're probably most familiar with, and that is dehumanization. All three work towards a complex dehumanization at large of the human within the system or at the receiving end of the system. The first of these, authorization, refers to situations in which a person becomes involved in an action without really considering the implications and without really having to make a decision. As such, they're deferring to authority. They're embedded in a structure of authority, and they can pass on the responsibility, or the notion of moral responsibility, higher up to another authority.

Through authorization, control then is surrendered to authoritative agents, which often are themselves bound to larger, often very abstract goals, which then ultimately come to transcend the rules of standard morality. For those tasked with the actual delivery of violence, agency is diminished or lost or abdicated to a central authority or some authority, which in turn often cedes its authority to still higher powers. And then it becomes an extremely abstracted kind of configuration, very far removed from the moral concerns, which are concerns with other humans. The second process Kelman highlights in the erosion of moral restraint is routinization. So where authorization overrides otherwise existing moral concerns, processes of routinization limit the points at which such moral concerns can or would otherwise emerge. So routinization fulfills two functions. First, it reduces the necessity of decision making. Therefore, it minimizes occasions in which moral questions are being asked. And secondly, it makes it easier to avoid the implications of the action, since the actor, the person involved in the act, focuses on the details, on the process, on which box needs to be ticked, what process needs to be followed, rather than the meaning of the task.

What is the ultimate upshot of this task? And so raising objections in this configuration of tasks and processes becomes minimized. And the third process, the one that arguably connects most closely with the target objectification that we're familiar with and that is already discussed, is this aspect of dehumanization. Processes of dehumanization work to deprive victims of their human status. To the extent that victims are dehumanized, principles of morality no longer apply to them, and moral restraints against killing are much more easily overcome. Importantly, though, and this is a very important point, the same processes that degrade the moral status of the victim may also dehumanize perpetrators. These three facets facilitate the partitioning of cognition away from emotional states so that otherwise unacceptable acts can be pursued unbridled by moral restraint. Just a quick word about dehumanization. It is really one of the key features in almost all mass atrocities. There's a really fantastic book written by David Livingstone Smith, published in 2020, On Inhumanity. It offers a really powerful and comprehensive discussion of the psychological, social, and political aspects of dehumanization and the violent upshots. And so dehumanization for Livingstone Smith, and this is the reason why I'm mentioning this, is a kind of attitude.

It's a way of thinking about others. Dehumanization is not necessarily the act itself. Of course, that's the upshot of dehumanization: treating somebody in a dehumanizing fashion. But dehumanization is a mode of thought. It's a mode of thinking about others. It has to be installed before a dehumanizing act can happen. So it's a mindset that is installed before the violence occurs. This discussion has relevance for humans embedded in fully autonomous systems configurations, but also in semi-autonomous systems of the type that we have seen with Gospel, Lavender, and all the bizarrely named systems that were revealed in the +972 report on Gaza. So what we want to push back against is some of the lines of argumentation which say that, well, humans are so awful, it would be a much better, cleaner, more precise, more humane war if more autonomous weapons were in the mix, and more artificial intelligence more broadly. These kinds of systems, or this kind of approach, are often cast by advocates of AI-enabled systems as a kind of panacea against the worst of human-perpetrated violence.

But of course, human violence and the notion of human atrocity will not go away. It will just be accelerated with these kinds of systems. So we want to think a little bit, in this paper, about how these three aspects, authorization, routinization, and dehumanization or objectification, are realized in autonomous weapon systems or AI-enabled weapon systems. And so we look at how autonomous weapons operate and take a little bit of a dive into how exactly this technological logic works. AI is a tool for pattern identification. It's an analysis instrument. So what or who the computer sees is always already a mere object. It's a set of data points, not a human. The computer must literally objectify, classify, categorize the target. It doesn't see humans. It sees shapes, patterns, depending on what kind of system it is, that may or may not match certain data-fied parameters.

So dehumanization is already always implicit here. We cannot get out of that logic. We cannot rehumanize a human target for a system. AI systems understand and identify targets based purely on object recognition and classification. And artificial intelligence itself renders the world as it perceives the world: as a set of objects, as related patterns from which outcomes can be predicted and calculated, including the decision over which objects are then targeted. So the target then comes to be known as a set of statistical probabilities, wherein, and here we draw on the really great work of John Cheney-Lippold, seemingly discrete, unconnected phenomena are conjoined and correlatively evaluated. Within this process, data, behavioral data, contextual data, image data, social media data, probably medical data, and so on, are disaggregated and re-aggregated to conform to specific modes of classification. Drawing upon this data, the system calculates a systematic inference of who or what falls within a certain pattern of normalcy, which is classified as benign, or of abnormality, bad behavior, potential threat, in order then to eliminate that threat.

And it's quite interesting to look at what has recently become known about Project Maven, and the idea that Project Maven should be used as a warning system to identify, and this is a quote from the presentation, bad behavior, so that it can then be intervened in. So to be intelligible to a statistical model then is to be transcoded, and it's a quote from Cheney-Lippold, to be transcoded into a framework of objectification, to become defined, cross-calculated as a computational, ascertainable, actionable object. This statistically, categorically produced process creates a subject who, and again this is Cheney-Lippold, cannot rely on anything unique to them, because the solidity of their subjectivity is determined wholly outside oneself and according to whatever gets included within a reference class or a data set. They cannot decide. The three dimensions that facilitate the erosion of moral restraint in warfare are key features in the human-machine assemblage as they relate to the processes of applying violence as such. The systems provide precisely the authority, the organization, the optimized functioning that erodes moral space for concern or objection or agency, and it expands modes of control, patience, and care to begin the process of killing rather than foster restraint.

And when we take that seriously, we understand why there is perhaps a strong tension, if not a contradiction, in saying that killing will be more humane, or warfare will be more humane, with AI-enabled systems. There is always an implicit separation of cognition from affect present in the human-machine assemblage, but one that allows the human to become woven in as part of the machine, not just at the operational level but also at the broader level as well. Everything becomes the process, and the process logic becomes prioritized. Now, we're not necessarily arguing, and I kind of want to stress that, that the use of autonomous weapon systems will inevitably lead to mass violence of the worst kind. Generally, I think that would be a counterproductive point to make, but I think it has to be taken seriously as a high, high, high risk. And then second, we're not necessarily rallying against systematization as such. Systems are everywhere where humans organize themselves, and rightly so, including in war; systems of law are important. Systems are in some ways necessary to mitigate violence as well. So it's not about systematization at large or as such, but the systematization of violence.

Because it has become clear in what we've seen come out of Gaza in particular, and to some degree also other arenas of conflict, of course, that the use of AI systems is not leading to greater restraint in the application of violence. On the contrary. So we should challenge the idea that AI systems or autonomous systems can be used to make war more humane, somehow more ethical, that the systematic application of force in war is somehow a moral panacea to political violence as such. Now, there's always been an interplay between humans and the use of violence. Certain technologies can be used as instruments as such, but I don't think AI-enabled systems can be used in this purely instrumental fashion. And it is perhaps important, for me it is at least, in the discussions on autonomous weapon systems and AI-enabled systems, that [unintelligible]. And I think that's a very important point.

[unintelligible] ... article showed with such clarity and really granular, great detail what we can know about these systems. And what many programs such as Project Maven and its promotional materials show is that with AI in the mix to produce valid targets, the act of killing becomes a workflow issue: hitting more targets faster, not running out of targets. It becomes a matter solely of speed and scale and optimization, which of course is one thing for, say, a manufacturing process, if you're making, I don't know, hammers or screws or whatever you want to manufacture, but it is yet another for the act of killing humans. No peaceful future can be built on the systematic production and elimination of human targets as a primary tactic for warfare. And I think I'll leave it there. And I'm happy to say more about all of that at the Q&A. Thank you.

Thank you.