Militaries often invest in algorithmic warfare in the name of humanitarianism, to minimize civilian casualties and save soldiers’ lives. Yet Israel’s war in Gaza exemplifies a shift to necrotactics, years in the making. AI-powered targeting systems have been integral to this development, allowing military heads—in Israel and beyond—to parse operational success in terms of Silicon Valley’s metrics.

Rather than days without violence, military gains are calibrated by the quantity of targets generated, the percentage of assassinations realized, and the number of arrests carried out. Rooted in testimonies provided by intelligence veterans and an analysis of Israeli and American military strategy over the last decade, this talk outlines how technologies and ideologies imported from Silicon Valley tangle with distinct cultural shifts in military institutions to rationalize an embrace of lethality.

Read full transcript (generated by Whisper)

Sophia Goodfriend is an anthropologist and postdoctoral fellow at the Belfer Center's Middle East Initiative at the Harvard Kennedy School. Her research examines how big data and machine learning have transformed what it means to wage and live with war in Israel and Palestine. Her reporting has appeared in London Review of Books, Foreign Policy, +972 Magazine, The Baffler and Jewish Currents, and in many peer-reviewed scholarly journals across anthropology and Middle East studies. She is currently working on two books about the impact of emerging technology on military conflict in the Middle East. And your talk is called The Cult of Lethality. Sophia, you have the floor.

Great. Thank you, everyone, for having me. I think there's a lot of generative dialogue between my presentation and Elka's, so thank you for that. It gave me a lot to think about. My talk today looks at the use of AI targeting systems in Gaza over the last year and a few months. I'm really going to be outlining how the use of AI systems, to the tragic and lethal effect that we've seen, arises from particular cultural and political shifts that have been happening over the past years. I'm going to be talking about the role of AI in Israel's military. So my intervention, I think, is really turning to politics and culture to understand how technological systems come to be deployed and used in the way they are. So I'm drawing from research that I've done, as you said, with Israeli intelligence veterans and upper-level commanders, and research in Israeli military archives as well, over the past few years.

So I want to begin with an article by Yuval Abraham on how Israel responded to the attack of October 7th, when militants killed over 1,500 Israeli civilians and soldiers and took more than 250 hostage, by launching one of the most deadly bombing campaigns in recent history. And Yuval's reporting really revealed how the bulk of destruction was abetted by relatively new AI systems. So one, named Lavender, culled through masses of surveillance data on every civilian in Gaza to place people on kill lists.

And this is an infographic from a group called Visualizing Palestine that kind of breaks down how that worked. And we can talk more about how these systems work in the Q&A. But simply put, they rely on mass surveillance to basically rank every civilian in Gaza according to a scale from 1 to 100. And that helps determine who's placed on a target list. There was another system, called Where's Daddy, that sent alerts when these targets entered their family homes. And a third system, named The Gospel, determined where Hamas militants launched rockets or stored weapons. And so the last two determined exactly where the military would bomb. So for a military set on destruction rather than accuracy, as one senior defense official put it in the early days of the war, this suite of AI systems allowed it to operate at a quite inhuman speed and scale. Now, I should say that in Israel, the article did not garner much outrage. By April, the war had already dragged on for more than six months. And despite the destruction, with over 30,000 Palestinians killed and more than a million displaced, the IDF had actually failed to achieve its stated war aims, which were to decimate Hamas and to bring the remaining hostages home.

But Yuval's reporting, this article that I began with, was really seen as good PR for the Israeli military. A few weeks later, IDF leaders took Israeli press on a tour of an intelligence base in southern Israel. And there they showed these suites of AI systems really in action. A senior commander at that press tour bragged that Lavender had, quote, revolutionized warfare by providing an unprecedented number of targets, and that they had kind of broken the human barrier, or the barrier in place when humans alone are kind of culling through surveillance data and generating targets for the military. So my talk today outlines how we arrived at this point. And I want to go back to specific cultural and political shifts.

I want to frame these technologies as shaped by, and in turn influencing, the political and cultural context in which they emerge. So I'm drawing here from many scholars of science and technology studies who remind us that algorithms undergirding systems like Lavender are socio-technical systems, which is to say they're made and remade by people, markets, ideologies, and the technical infrastructures that subtend them. So when I'm talking about cultural and political shifts, I want to go back about 10 years. In 2016, an Israeli soldier named Elor Azaria, who was a combat soldier deployed to patrol the city of Hebron in the occupied West Bank, shot and killed a Palestinian man who already lay immobilized on the ground. Now, Azaria was tried in an Israeli military court for this shooting. He was tried for manslaughter, and it was a very rare occurrence to try an Israeli soldier for manslaughter of a Palestinian. The trial sparked historic protests across the country. Tens of thousands demonstrated outside of Israeli army bases, in public squares, in major cities, in front of government buildings, and the cause of their outrage was quite clear. They decried the military as weak, and they said that the army appeared more intent on saving Palestinian lives than protecting the lives of Israelis, even its own soldiers like Azaria. Scholars of Israeli militarism like Yagil Levy and Rebecca Stein often hold up what came to be called the Azaria affair as a tipping point, a moment when Israel's traditional military establishment, which is often seen as centrist, secular, and more or less rule-abiding, was losing its authoritative grip on the rest of society. The ideology of Israel's radical right, which had long championed deadly force against Palestinians, was moving more from the margins to the mainstream. And to shore up its legitimacy among an increasingly right-wing populace, Israeli military heads began actually promoting more deadly tactics in the occupied Palestinian territories. Soldiers were instructed to shoot to kill, drone strikes in the West Bank and Gaza became more frequent, and military spokespeople actually began publishing lists of Palestinians assassinated after every major operation, to kind of show that they were embracing more lethal tactics. Now, these policy changes marked a shift from the IDF's old policies in the West Bank in the first few decades of the 21st century. Since the early 2000s, Israeli military heads had been promising that a progressively technological occupation would make Israeli military rule more humane for Palestinians. And this is, you know, part of larger discourses that we've touched on today, mythologies that innovations in algorithmic warfare and surveillance are more humane because they're more precise. Similar statements were made in Israel during those years. Digital and then automated technologies, from reconnaissance drones to biometric cameras and other kinds of remote sensing systems, had promised to effectively manage Israel's occupation. Military innovations were said to reduce the number of soldiers deployed to combat and minimize the intrusiveness of military rule for Palestinians.
But by the late 2010s, violence in the West Bank was rising, and right-wing Israeli politicians were gaining unprecedented political power. They and their supporters were quite sick of these promises of humane military strategies that would pave the way for gradual peace plans, even if those plans were never going to materialize. And, you know, there are debates about that. But simply put, the rising right wing demanded more brutal military tactics, more violent displays of force, and more lethal outcomes. Now, the army did meet these demands. When Aviv Kochavi, who's depicted here on the right, took over as head of the IDF in 2019, he pledged to make the army into a lethal, innovative and efficient fighting force. Those were words that he said in his entry speech when he took on that role. So the words lethal, innovative and efficient offered a string of vague doctrinal concepts that appealed to different sectors of a fractured Israeli society. For the right, which was gaining influence, the word lethality carried a certain kind of power. It evokes the brutality of a military that sees killing, rather than viable peace plans, as the principal metric of military success.

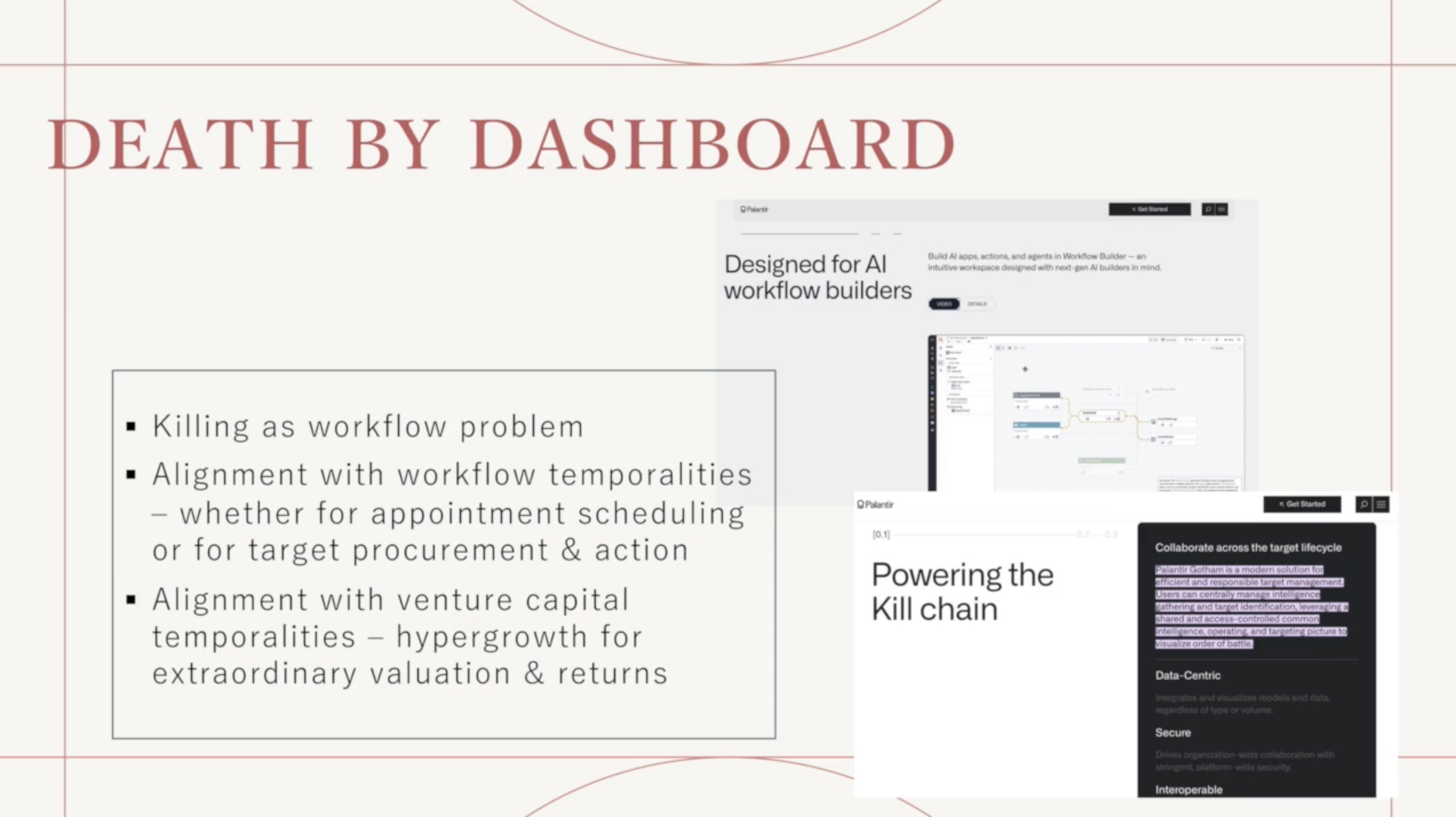

This was really part and parcel of global trends that we've kind of gestured to in the presentations that came before me. So worldwide at this time, militaries long obsessed with Silicon Valley's mode of production were turning to big data analytics and machine learning to really scale up their killing capacities. In 2018, for example, the US Department of Defense made the restoration of lethal force a key concept in its official national defense strategy, touting innovations from, you know, Palantir surveillance models to Google Cloud computing to AI-assisted drone swarms as really key to these efforts.

The writer Jared Keller described it as the Pentagon's new cult of lethality. So that's where I'm taking that phrase from. And I think cult, you know, has a specific connotation. It connotes a kind of irrational veneration or a kind of crazed devotion, misplaced faith. I think it's an apt descriptor for militaries obsessed with really optimizing their killing capacities. As the scholar Matthew Ford puts it, quote, "Lethality preserves the fiction that science both offers certainty in war and underpins the utility of military operations." So in my writing, I draw on scholars of digital and algorithmic warfare that I've cited, and more as well, to outline how the embrace of lethality makes militaries mistakenly believe killing can be mastered like an exact science. Innovations in machine learning and data science allowed Israeli military heads to articulate killing as an engineering problem. And this is actually a collection of slides from a talk given in February 2023 by the commander of data science and AI within Unit 8200, which is Israel's intelligence unit. The talk really broke down the internal logic of targeting systems that came to be deployed in Gaza. He gave this talk at a tech conference for venture capitalists and technology CEOs, where he ended up inadvertently leaking really classified information about how these systems worked. And I think that's a point that kind of tugs at what Elka was talking about, in terms of militaries obsessed with Silicon Valley ideology and kind of trying to speak to these crowds. But, you know, when killing is parsed as this kind of exact science, soldiers responsible for refining these systems are really also encouraged to reduce human life into data points, viewing them as inputs to an equation that they must optimize like any other system. And this, you know, has resounding implications, as Elka has said, for how soldiers view the ethical implications of waging war. And we can also talk more about that and keep that conversation going. But it also upends military strategy as a whole. So militaries obsessed with engineering better ways to kill will rarely wonder if such tactics are effective in the long run. It creates, in the words of Jon Lindsay, who wrote an excellent ethnographic account of the use of similar AI-assisted targeting systems in the U.S. war on terror, which Lucy references in a paper, a, quote, "feedback mechanism that inhibits self-evaluation," which means that the means of war (more data, better algorithms, heftier contracts with Silicon Valley) subsume any other aims. And this has really been the case in Israel. My research outlines how iterative models undergirding targeting systems gradually came to shape Israeli military strategy. Intelligence units were put in the service of engineering and refining ever more lethal weapons. So by the mid-2010s, when the army was embracing lethality quite publicly because of these political shifts, soldiers were actually given incentives to experiment with, evaluate and refine targeting systems, tinkering with speech-to-text software, predictive models, forecasting systems. Others were encouraged to abandon more classic intelligence methods, and military strategy as a whole came to mimic the loop of experimentation, evaluation, and feedback that shapes algorithmic systems. Now, I don't need to tell you that the limitations of these strategies came to a head on October 7th, when the Israeli military tragically failed to prevent Hamas's attack. Israel's intelligence units were caught more or less blindsided, even though there were plenty of warnings that something was afoot. And many military analysts within Israel kind of cited this romance with technology and overreliance on AI systems as a cause.

But politicians quite publicly promised to exact as much destruction as possible. We've seen many of these statements kind of quoted in Israeli press and international press since the war began. So intelligence units were actually put to the service of just churning out as many targets as possible, using AI to inflict a campaign that was kind of framed as a campaign of vengeance, one of destruction rather than accuracy. So reservists I spoke to who served in intelligence units put it this way, and here I am quoting them about kind of the atmosphere within intelligence units after October 7th. Some of this was in a London Review of Books article that I wrote. They said that, you know, I should be clear, "after the seventh they wanted to bomb as much as possible, so they let the machines do it," or "they wanted to show the government some sort of success, so they would shout, 'Bring me more targets.'" And I think these kinds of statements, which give an inside look into what it was like to actually use these systems after October 7th, are really important. They show how military heads, you know, really intent on inflicting as much damage as possible, set about just optimizing their killing capabilities.

So we saw this in specific decisions military heads made, which Yuval's article references and uncovers in a lot of detail. Military heads ordered soldiers using systems like Lavender to lower the thresholds used to determine who or what constituted a viable target. They told soldiers to actually raise the number of civilians that could be killed in targeted attacks. And they gave soldiers as little as 20 seconds to sign off on each strike, meaning many of these tools were made to function almost as fully autonomous weapons, where the human kind of intervention and oversight was quite minimal. So these were decisions made by a military that was really set on maximizing the outcomes of lethal AI systems above all else. And so I kind of framed this talk with the provocation that algorithms aren't black boxes, which is a common refrain within critical algorithm studies and technology. Rather, they're sociotechnical systems arising out of and coming to shape particular social and political contexts.

I think this framework is helpful for demystifying the AI weapons deployed in Gaza and beyond. And I really wanted to underscore how in Israel an embrace of big data and machine learning to such lethal effect arose out of cultural and political shifts in the military. It really offered a way to shore up the legitimacy of the army, to retain its monopoly on violence amidst unprecedented divisions and demographic changes. From the mid-2010s onwards, AI targeting systems offered the military a way to build its lethal capacities to scale in a bid to appeal to a growing right-wing political base. At the same time, the use of these systems lent ever more brutal military campaigns a kind of veneer of scientific legitimacy. It quelled whatever opposition there might have been among left-wing soldiers and politicians by framing military operations as kind of scientifically rational and guided by state-of-the-art technologies. But most of all, it allowed a deadly process of algorithmic experimentation and refinement to continue without end. So I've tried to emphasize really how AI has existed in a kind of symbiotic interplay with the demands of Israel's political and military establishment. For a military that views success in terms of body counts, the logic of algorithmic processing, which is a feedback loop of data extraction, analysis, and decision-making, offers endless opportunity to refine and perfect its tactics. And for a government that sees unending war as a way to further larger ambitions of annexation and territorial expansion, focusing on these kinds of iterative, calculable processes helps it attain such goals, which is to say that a military obsessed with killing, and killing more efficiently, will always subordinate the supposed aims of war, aims like protecting the polity and preventing further violence, to the means of waging it.

So to conclude, I just want to say a few words about the implications of all this. I think, you know, militaries around the world are using similar systems; Israel's not exceptional in this regard. And, you know, we've talked about how the United States, for example, is pushing full throttle ahead and incorporating AI into almost all aspects of military operations. And in response to kind of concerns about the ethics and efficacy of these changes, military leaders often assure the public that humans remain fully in charge of these systems. Here's a quote from Joseph O'Callaghan, who's the leader of the U.S. military's AI targeting efforts, that was printed in Bloomberg last year. He said, "It's not the Terminator, the machines aren't making the decisions, they're not going to arise and take over the world." And I just want to emphasize, as scholars of science and technology studies have long reminded us, that humans are never really fully in charge of these systems. And I think the case of Gaza and the use of targeting systems there really emblematizes how AI, culture, and politics exist in a far more messy kind of interplay that complicates understandings of agency and control. In Israel, an unfettered embrace of AI actually just sutured military strategy writ large to the iterative logic of algorithmic systems, which is to say that optimizing the army's lethality, building killing capacities to scale, these have become the main aims of warfare. And these goals may further certain demands of certain politicians, but they actually erode individual and collective capacities for overriding or intervening in AI decisions, especially in the name of real security or lasting peace. As I've tried to emphasize today, I think all the violence that we've witnessed over the past year shows us that these goals really simply ensure that bloodshed will just continue. So thank you. Unless…