As my title suggests, the focus of this essay is not drones per se, but rather the wider turn to algorithmic warfare that they enable and of which they remain a key part, from surveillance operations to targeted assassination. I want to begin by acknowledging, in homage to our conference title, that we are at a moment bookended at one end by the first armed drone ‘kill’, using a Hellfire missile in Afghanistan in 2001, and at the other by the aspirational ‘attritable’, or expendable, Replicator swarm project currently underway at the US Department of Defense, accompanied by further investments in defense against the anticipated development of similar technologies by others. The use of small, commercially available drones in Ukraine and Gaza already enacts a new form of terror for those on the ground.

My starting point in engaging with these developments is the claim for the greater precision and accuracy of data-driven warfighting, a contention that has been shown to be tragically false in Israel’s bombardment of Gaza following the Hamas attack of October 7, 2023. To understand the fallacy of this claim, we need to look more closely at the relation, and the differences, between two things. The first is precision as a matter of the accuracy with which a weapon, once launched, will reach its target. The second is the prior – and crucial – question of whether something or someone has validly been identified as a legitimate target to begin with. In military discourse and associated media reporting, the term ‘precision’ almost always refers to the first sense, the relation between the weapon and its target, while the question of how something or someone was designated as a target is left unaddressed. But that is the overriding question if we are concerned with the lawfulness or criminality of warfighting.

There is a parallel between this question and the making of ‘ground truth’ in the technical practices of Machine Learning. As a technical term, ‘ground truth’ claims alignment with truths on the ground, in the sense of the lived experiences and relations of persons in places. The motivating context for me in thinking these two problems together is the embrace of algorithmically based targeting by the US and Israeli militaries. This situation concerns me both as a Jewish American and from a broader commitment to the laws of war and, in the longer term, to paths towards disarmament and demilitarization.

So let us start with AI, an acronym that my colleague Andrew Clement has helpfully suggested is best expanded as ‘algorithmic intensification.’ The figure of AI, I have argued along with many others (Suchman 2023), is a highly mystifying label for practices dominated in the present moment by Machine Learning and its elaboration as ‘deep neural networks’. These computational techniques extract statistical correlations (designated as patterns) from datasets by adjusting model parameters in response to either internally or externally generated feedback. The results are then used as the basis for automated prediction, recommendation, and decision systems.

In a paper titled ‘We get the algorithms of our ground truths’, Florian Jaton observes: “behind many algorithms lies a ground truth database that has been used to extract relevant numerical features and evaluate the accuracy of the automated transformations of inputs into targets. [Note that the word ‘targets’ is already there, embedded in the practice, even before we get to warfighting.] Consequently, as soon as such algorithms – ‘in the wild’, outside of their production sites – automatically process some new data, their respective ground truths are invoked and, to a certain extent, reproduced” (Jaton 2017, 829).

Ground truths and the datasets from which they are built and that they reproduce require, and largely presuppose, operations of datafication; that is, the rendering of worlds of interest as numbers.

This becomes a problem, Lisa Gitelman (2013) and co-authors have argued, when those numbers are naturalized through the erasure of the interpretive and translation work through which enumeration, or data reduction, gets done. Geoffrey Bowker and Leigh Star set out this argument powerfully in their book Sorting Things Out: Classification and Its Consequences. There they show how systems of classification work to produce, and reproduce, the objects and relations of affinity and difference that they represent or describe. “Difference,” they observe, or “distinctions among things, is the prime negotiated entity in the construction of a classification system” (1999, 231). Difference, of course, always works in relation to likeness: placing something into a category is an assertion of its similarity to the other members of the category, at least sufficiently for the practical purposes at hand.

In military discourses and the media reports derived from them, ‘data’ are consistently naturalized, treated as objectively given signals emitted by the world. This glosses over a myriad of questions, including what is and is not detectable through technologies of surveillance, and how machine-readable signals get translated into what the military names ‘actionable intelligence.’ The latter rests on assumptions about the validity of categorical modes of identifying objects, persons and so-called patterns of life. It also relies massively on the assignment of guilt by association, a problem to which I will return shortly.

This all matters because classification is at the foundation of International Humanitarian Law. There, what differentiates murder from the legitimate taking of life turns on the Principle of Distinction between those who are in combat and pose an imminent threat, and those identified as civilians. This principle remains crucial as a resource for legal accountability in the face of its systematic violation in warfighting.

The work of making that distinction is conceptualized in military doctrine as the result of a process named ‘the kill chain,’ the procedures through which persons become targets for the use of lethal force. The canonical OODA loop – Observe, Orient, Decide, and Act – has been updated to place sensor data at the origin of a procedure now labeled F2T2EA, or ‘Find, Fix, Track, Target, Engage [the euphemism for kill], and Assess’.

While these are its latest instantiations, the data-driven targeting imaginary has a longer history dating back at least to US operations in Southeast Asia. General William Westmoreland, then Chief of Staff of the US Army, articulated it in 1969 when he offered these thoughts over lunch: “On the battlefield of the future, enemy forces will be located, tracked, and targeted almost instantaneously through the use of data links, computer assisted intelligence evaluation, and automated fire control. … I see battlefields on which we can destroy anything we locate through instant communications and the almost instantaneous application of highly lethal firepower. … With cooperative effort, no more than 10 years should separate us from the automated battlefield” (Westmoreland 1969). The US effort to implement this fantasy along the Ho Chi Minh Trail during the Vietnam War, Operation Igloo White, was an infamous failure. Yet the holy grail of data-driven omniscience and weapon systems automation lives on. Some five decades after General Westmoreland’s vision, the US Department of Defense has built out its infrastructures of surveillance beyond its capacity to render the data they generate as usable information.

In April 2017, the DoD announced plans for its flagship AI project, the Algorithmic Warfare Cross-Functional Team, code-named Project Maven. In announcing Project Maven, then Deputy Secretary of Defense Robert Work asserted the urgent need to incorporate artificial intelligence and machine learning across DoD operations. More specifically, Work states in his memorandum, Project Maven’s aim is “to turn the enormous volume of data available to [the] DoD in the form of full-motion video into actionable intelligence and insights at speed” (Work 2017). The plan as Work sets it out includes an initial project focused on labeling data within full-motion video images generated by US drone surveillance operations. This was conceived as a first step toward establishing the algorithms and computational infrastructures needed to automate object detection and classification in support of military operations.

The media reports surrounding Project Maven overwhelmingly sidestep the question of the criteria by which objects are identified as imminent threats. We are told that those who hand-labeled 150,000 images to form the initial training data used 38 categories (Allen 2017), including the object ‘ISIS pickup truck’ (Peniston 2017). Project Maven raises the question of the correspondence between systems for categorizing images of objects, in this case a truck, and the ways in which objects are incorporated into complex and changing relations and associated practices. This brings us back to the problem with which I began. As Grégoire Chamayou observed a decade ago in his book A Theory of the Drone (2014), the claims for precision that justify new investments in automated targeting systems rest on a systematic conflation. On one hand is the relation between a weapon and its designated target; on the other is the identification of what constitutes a (legitimate) target. No amount of improvement in the precision of targeting in the first sense can address the growing uncertainties of target identification. This conflation is part of a campaign to deny two developments: the increasing reliance of these systems on ever more questionable forms of stereotypic categorization of what and who constitutes a legitimate target, and the expanding temporal and spatial boundaries of what comprises an imminent threat.

The leading justification for these systems has always been the promise of precision and accuracy in targeting, in the name of adherence to International Humanitarian Law and the Geneva Conventions. In a public plenary in February 2021, Robert Work, then vice chair of the National Security Commission on AI under chair Eric Schmidt, offered this demonstration of moral reasoning: “The biggest contributor to inadvertent engagements is target misidentification … Humans make mistakes all the time in battle. And the hypothesis is, to be proven, that artificial intelligence will improve target identification, which should improve, and reduce the number of collateral damages, reduce the number of fratricides. So, it is a moral imperative to at least pursue this hypothesis” (Work 2021).

This ‘hypothesis’ enacts a familiar move, as the failures of humans become the justification for further automation. Combining magical thinking with a leap of faith (here named a ‘hypothesis’), it proposes that automated systems will somehow transcend the limits of the very knowledge practices that must necessarily inform them. But we now have ample evidence that the automation of data analytics works to reproduce and amplify the classificatory schemas that inform the data set. And nowhere is this more treacherous than when the data set is made from proxy signals, profiles and so-called patterns of life.

Despite its early setbacks, Project Maven is alive and well and now a DoD ‘program of record.’ In 2022 the Pentagon handed the Maven program to the National Geospatial-Intelligence Agency, which is responsible for processing the US’s vast intake of aerial surveillance. In an interview with the defense media outlet Breaking Defense, the agency’s outgoing director, Vice Admiral Robert Sharp, said the fusion of Project Maven with his agency’s ongoing AI efforts will “give us our millions of eyes to see the unseen” (Hitchens 2022).

Maven’s promise supports the wider DoD initiative named Joint All Domain Command and Control (JADC2). In announcing that program, Charles Pope of Secretary of the Air Force Public Affairs promises that “As envisioned, JADC2 will allow U.S. forces from all services … to sense, make sense and act upon a vast array of data and information … fusing and analyzing the data with the help of machine learning and artificial intelligence and providing warfighters with preferred options at speeds not seen before” (Pope 2021).

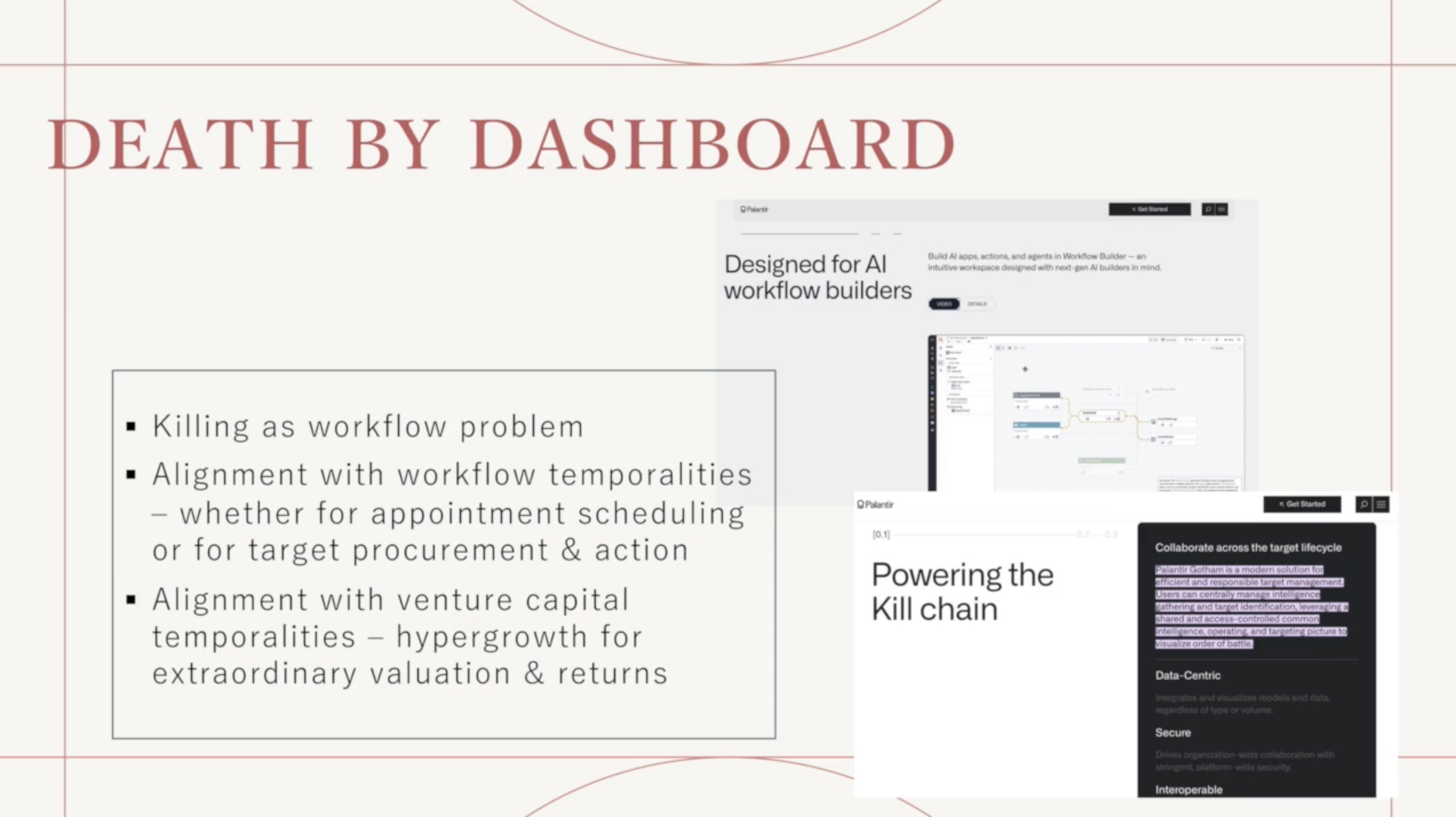

The primary developer of the newly named Maven Smart System is Palantir. Led by self-satisfied master of war Alex Karp, the company earlier this year secured a $480 million contract with the US Army. Command and control in the moment of Generative AI is built on top of Palantir’s AI Platform, which offers access to an LLM-based back end through a dashboard that includes a ChatGPT-style conversational interface (see the recent partnership of Anthropic, Palantir, and AWS). Palantir assures its military customers that the platform has been designed to activate data and models “from classified systems to devices on the tactical edge,” to maintain a real-time representation of the battlespace (Palantir 2025).

The AI Platform and its building out into the Maven Smart System are implementations of what Judith Butler has named the ‘frames of war’ that delineate the legitimate target, along with a field of collateral damage. In Frames of War, Butler asks: “What is formed and framed through the technological grasp and circulation of the visual and discursive dimensions of war? This grasping and circulation is already an interpretive maneuver, a way of giving an account of whose life is a life, and whose life is effectively transformed into an instrument, a target, or a number, or is effaced with only a trace remaining or none at all” (Butler 2010, ix-x).

To recover those lives, we must turn to the question of truths on the ground. Close analyses of US operations by investigative journalists make clear that the problem of civilian death in military operations is not a matter of inadequate sensor networks or access to information. Rather, it is a matter of the frames of war into which militarism’s subjects and objects are incorporated. In 2021, investigative journalist Azmat Khan definitively challenged claims for the precision of US operations against the Islamic State of Iraq and Syria, or ISIS, in a series of articles in the New York Times. Khan and her team analyzed over 1,300 “credibility assessments” by the US Defense Department regarding reports of civilian deaths from airstrikes that took place between September 2014 and January 2018. She found that, rather than a series of tragic errors, the reports documented systematic failures. These included failures to detect civilians, to investigate on the ground, to identify causes and articulate lessons learned, or to hold anyone accountable in ways that would prevent the problems from recurring. It was, she writes, “a system that seemed to function almost by design to not only mask the true toll of American airstrikes but also legitimize their expanded use” (Khan 2021).

Along with the records, Khan’s reporting is based on five years of on-the-ground investigations. She concludes: “On the ground, I found a pattern of life that was very different from the one that the military described in its credibility assessments, and documented death rates that vastly exceeded U.S. Central Command’s own numbers. I also came away with a grim understanding of how America’s new high-tech air war looks to civilians who live beneath it — people in Syria, Iraq and Afghanistan trying to raise families, earn a living and stay away from the fighting as best they can” (ibid.).

Which brings us to this image, introduced by the Israeli defense team at the International Court of Justice in The Hague, in response to the charge of genocide brought to the court by South Africa (Forensic Architecture 2024a).

We see here an aerial view of a street in Gaza, annotated in white to show the boundaries of a building labeled ‘hospital,’ and a human figure identified as a ‘terrorist’ carrying an object labeled as a ‘rocket-propelled grenade or RPG launcher’. The white line indicates that the figure is inside the boundaries of the hospital grounds. The annotations in red have been added by the London-based group Forensic Architecture.

Forensic Architecture has conducted an extensive investigation of ten exhibits introduced as evidence by the Israeli defense team at the International Court of Justice, providing systematic counterevidence of misrepresentation in each. In this case, the misrepresentation concerns the claim that Hamas uses ‘human shields’ by basing its operations in civilian infrastructure. Forensic Architecture’s analysis of this exhibit showed that the building labeled ‘Hospital’ is in fact a residential building outside of the grounds of the hospital complex that was subsequently bombed. We could of course also question the designation ‘terrorist,’ part of the wider erasure of any reciprocity regarding who has a right to self-defense. We could likewise question the proportionality of Israel’s massive and ongoing attacks on residential buildings, hospitals, and schools in Gaza. All of these buildings are now also refugee camps, and so are further prohibited as targets under the laws of war.

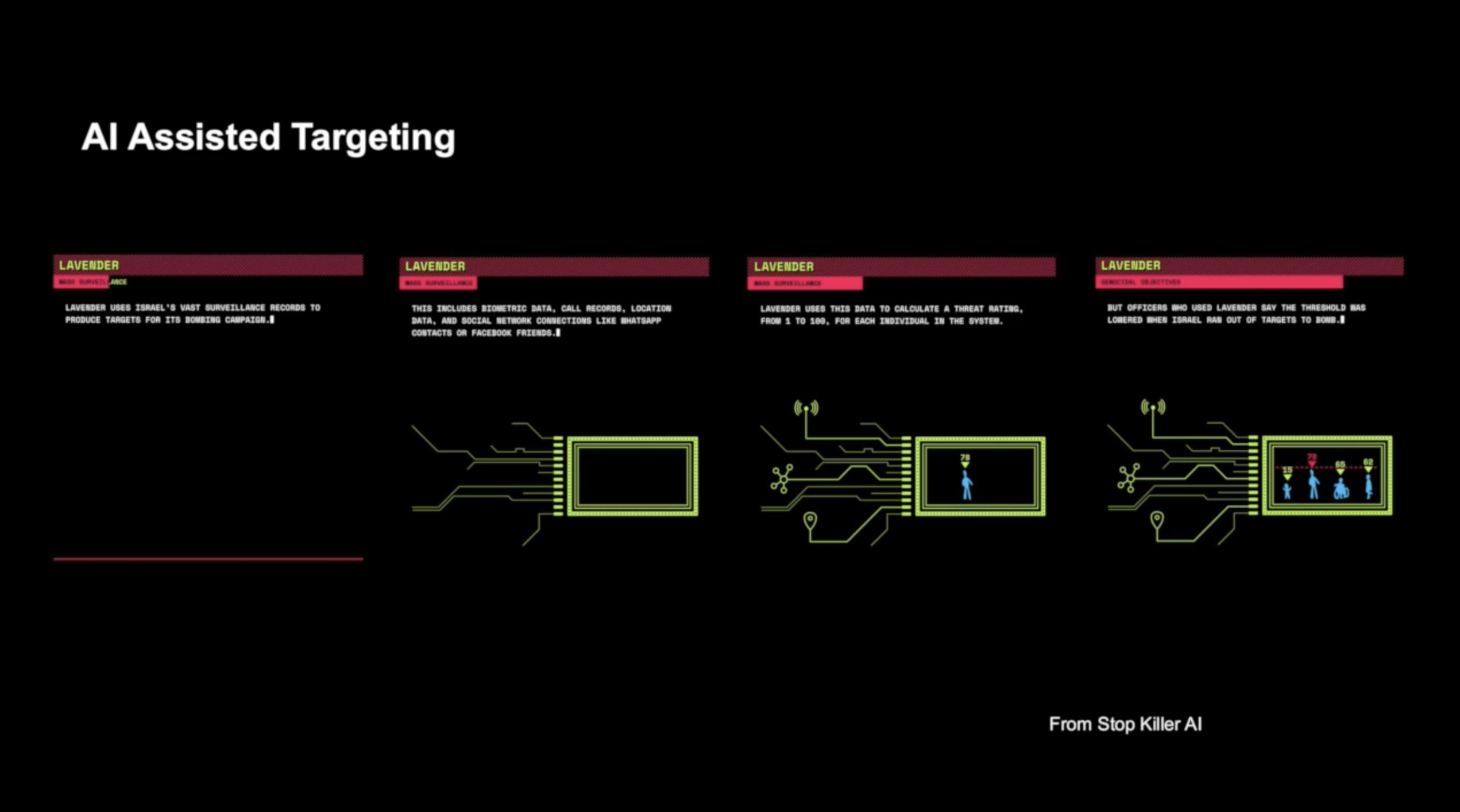

The truths on the ground of data-driven warfighting have become abundantly clear with the IDF’s reported application of ML to targeting. Journalist Yuval Abraham (2023, 2024) has given us detailed accounts of two of the IDF’s current systems, named respectively Habsora or ‘the Gospel’ and ‘Lavender.’[1] The first report, published in November 2023, draws on sources within the Israeli intelligence community. They confirm that IDF operations in the Gaza Strip combine more permissive authorization for the bombing of non-military targets with a loosening of constraints regarding expected civilian casualties. This policy enables the bombing of built structures in densely populated civilian areas, including high-rise residential and public buildings designated as so-called ‘power targets’. Official legal guidelines require that selected buildings must house a legitimate military target and be empty at the time of their destruction. The latter requirement has resulted in the IDF’s issuance of a constant and changing succession of unfeasible evacuation orders to those trapped in diminishingly small areas of Gaza. A direct corollary of this operational strategy is the need for an unbroken stream of candidate targets. To meet this requirement, the Habsora software is designed to accelerate the generation of targets from surveillance data, creating what one former intelligence officer (quoted in the story’s headline) describes as a ‘mass assassination factory.’

The second story, published in April 2024, describes the system named ‘Lavender’, which is dedicated to the targeting of individuals designated as suspected ‘low level’ Hamas or Palestinian Islamic Jihad militants. In the Occupied Territories, surveillance includes massive amounts of aerial imagery (from satellites to drones), cell phone signals taken as proxies for location and movement, biometric registration at hundreds of checkpoints including facial recognition systems, and monitoring of digital communications for targeted names and keywords. The training data for Lavender were based on ‘features’ correlated with known Hamas or Palestinian Islamic Jihad fighters. Measured against these features, every individual in the population dataset was assigned a score from 1 to 100. Based on these scores, the system marked some 37,000 people as probable militants and potential targets. The machine-generated targets were given only cursory review. One source explained that he would spend roughly 20 seconds confirming from the data that the individual was male, given the prohibition within Hamas and Palestinian Islamic Jihad against women fighters.

Most notably, the Israeli bombardment of Gaza has shifted the argument for AI-enabled targeting from claims to greater precision and accuracy to the objective of accelerating the rate of destruction. Underscoring this shift, IDF spokesperson Rear Admiral Daniel Hagari has confirmed that in the bombing of Gaza ‘the emphasis is on damage and not on accuracy’ (Abraham 2023). For those who have been advancing precision and accuracy as the high moral ground of data-driven targeting, this admission must surely be disruptive. It shifts the narrative from a technology in service of adherence to International Humanitarian Law (IHL) and the Geneva Conventions, to automation in the name of industrial-scale productivity in target generation, enabling greater speed and efficiency in killing.

There is a through line from Westmoreland’s vision for Vietnam, to the ‘unmanning’ of the air force and the initiation of remotely controlled targeted assassination during the so-called War on Terror (2001–2021), to current investments in data-driven warfighting. Each of these rounds of techno-militarism perpetuates the myth that war can be rationalized – and by implication made more precise and, by further implication, more humane – through the expansion of infrastructures of surveillance, the pseudo-objectivity enabled by datafication, and the automation of data analysis and targeting.

While we need to pay attention in the current moment to the enormous expansion of signal-generating infrastructures, we also, I am arguing, need to attend to that which escapes capture by datafication. The aim is to destabilize the premises through which technomilitarism perpetuates its logics of rational and controllable state violence while obscuring its senseless and unaccountable injuries. Rather than further accelerating the speed of warfighting, we need to challenge the premise of an inevitable AI arms race. We need to redirect our tax dollars to innovations in diplomacy and social justice that might truly de-escalate the current threats to our collective and planetary security. The United States is the overwhelming military hegemon in the world, with military spending roughly equal to the combined spending of the next several most heavily armed countries, including China and Russia. The investments in maintaining that position extend beyond national security to an ever-expanding military-industrial-commercial-academic complex. That complex is held in place by unexamined assumptions that underpin belief in the inevitability of war and that marginalize movements away from profits for some toward just and sustainable futures for all.

[1] See Goodfriend 2024 for a synopsis and analysis of these reports in terms of their implications for International Humanitarian Law and International Human Rights Law. Goodfriend also addresses the culpability not only of the IDF but also of the technology providers who enable algorithmically accelerated target generation.

Note: this text is drawn from a talk delivered on 3 December 2024 in Munich at the Dronomation conference, AdBK Munich.