To say that ours are darkening times is to acknowledge that the present moment – the year is 2025 – is increasingly characterised by familiar modes of authoritarian oppression, of right-wing political cruelty and of inevitably rising geopolitical tensions in the context of which nuclear Armageddon and the necessity for autonomous weapon systems are frequently conjured up, often in the same breath. It is a time in which hard-fought-for international law, designed to protect the innocent, is being eroded, and norms for the common good are wilfully violated. It is a present in which the currency of humanity depreciates steadily while the valuations of technology companies soar. It is, as Günther Anders would say, a fully technologized world in which the primacy of products and artificial objects determines the logic and value of all else in the world (Anders 2010). Such a world is also a more violent world. The past two years, which have seen a higher civilian death toll than any period in over a decade, are testament to this (Sabbagh 2024).#Günther Anders, #international law, #violence

In 1964, Anders penned an open letter to Klaus Eichmann, son of Adolf Eichmann, the notorious Nazi SS officer who organised the logistics of the mass deportation of Jews to the extermination camps, and whose apparent moral apathy toward his violent deeds is well documented and widely discussed, encapsulated in the phrase Hannah Arendt coined: “the banality of evil” (Arendt 1998). The letter is Anders’ medium for examining the roots of what he names “the monstrous” (Das Monströse): the fact that it is possible to exterminate millions of humans at an industrial scale and with factory-like processes; the fact that other humans become leaders, henchmen and handmaidens of this process – many “stubborn, dishonourable, greedy, cowardly ‘Eichmen’”; and the fact that millions of other humans remained ignorant of this great horror, because it was possible to remain so – “passive ‘Eichmen’”, so to speak (Anders 1988, 19-20). Anders offers ‘the monstrous’ up for close examination because without doing so we remain blind to the actual roots that make its existence possible. These roots did not cease to exist with the collapse of Nazi terror; quite the contrary. They are not only political; they are deeply woven into all aspects of the modern technologized world we have crafted. In fact, one of the roots that makes the monstrous possible, Anders diagnoses, is that “we have become creatures of a technical world” (ibid. 14), in which we fashion our lives and our selves in the image of the technological products we create.#Günther Anders, #Hannah Arendt, #automation

For Anders, writing in the context of the horrors of WWII and the latent possibility of nuclear annihilation, the techno-logical world has become so overwhelmingly expansive that it has created a chasm between that which we are able to produce (Herstellung) and our ability to imagine the effects these products have (Vorstellung). The result is a focus on technical processes and techniques, not on the effects of technologically mediated acts on our human world. Or, to put it with another mid-century thinker, Norbert Wiener, it is a world in which a strange obsession with know-how serves as a stand-in for knowing-what-for (Wiener 1989, 183). For Anders, it is the persistence of these foundations that makes a repetition of the monstrous not only possible but highly likely. His warning is that we should remain in critical correspondence with the technologies we make, so as not to lose sight of the degree to which their logic shapes our actions and perspectives and, importantly, our capacity for moral responsibility for our actions.#Günther Anders, #Norbert Wiener, #violence, #agency

Far from illuminating our thinking or our actions, an overly technologically mediated environment obscures human relations with the world and with each other. It is a structural condition in which the minutiae of the process inhibit the ability to imagine the magnitude of its effects as a whole. Complexity is a feature here, not a bug. It is perhaps also an ontological condition, whereby the human becomes so deeply enmeshed within the technological ecology, so fully drawn into the gravitational pull of machine logics, that all humans appear only as an assembly of information, data and processes, and the world itself is fashioned as a world of functional data objects, void of subjects, configured as a system, or perhaps a system of systems, in which humanity is present but illegible. Again, Norbert Wiener made a similar observation in the 1950s, when he warned that “when human atoms are knit into an organisation in which they are used, not in their full right as responsible human beings, but as cogs and levers and rods, it matters little that their raw material is flesh and blood. What is used as an element in a machine is, in fact an element in the machine” (ibid. 185, emphasis in the original).#Norbert Wiener, #complexity, #organization

What happens to ethical and political thinking in such a systems-oriented environment? And what kinds of priorities and preferences does this socio-cultural mode give rise to, especially in the context of warfare and violence? Or, to put it differently, what happens when violence becomes predominantly ‘systematic’ violence? In an article I co-authored with Neil Renic, we examine this trajectory toward ‘killing-by-system’ implicit in the violence inflicted through autonomous weapon systems (Renic/Schwarz 2023). There, we explore how the logic of AI-enabled weapon systems inevitably produces a form of systems-logic for the administration of violence. With this argument, our aim was to push back against proliferating analyses that explore the ethics of AI-informed violence in operational terms, often mimicking the technical analysis of risk assessments. Such approaches often justify the use of force with AI targeting systems as more precise or more humane (whatever that might mean in the context of killing), deal largely in hypothetical assumptions about the relationship between humans, technology, war and violence, and rarely, if ever, consider that the use of AI technologies might lead to more, rather than less, violence.#violence, #AI, #targeting

Before I unpack this relationship further, a brief explanation of the systems in question is in order. Autonomous weapon systems are systems which can perform so-called ‘critical functions’ – identifying, tracking and taking out a target – without human intervention in this kill chain. Autonomy may take different forms within a given system. It might, for example, be a drone which is able to execute the identification and targeting function on the ‘last mile’ without human guidance or communication. Or it might be as rudimentary as a rifle mounted on a mobile robotic platform which is programmed to identify a specific target through facial recognition and discharge its munition accordingly. But it may also materialise as an AI-enabled system of systems, in which an AI decision system not merely recognises, but discovers, or “acquires”, and nominates targets, identifies a viable connected weapons platform for attacking these targets and then executes the kill decision autonomously, without a human intervening in this action chain. This latter type of autonomous weapon system is not yet in operation, but the components for such a configuration of autonomous violence are already viably in place, and calls for expanded uses of AI targeting systems are swelling in military and defence industry circles. The allure of such systems – whether fully autonomous or with some nominal human decision process in the loop – is to increase the speed and scale of targeting. A 2024 report issued by the Center for Security and Emerging Technology (CSET) states that AI decision support systems are hoped “to meet a new vision of firing units to make one thousand high-quality decisions – choosing and dismissing targets – on the battlefield in one hour” (Probasco 2024). That is more than 16 targeting decisions per minute, or one every 3.6 seconds, each of which a human, or team of humans, would need to make in an informed way. It is easy to see that human agency in such a configuration is necessarily marginalised, with potentially dire consequences.#targeting, #autonomous weapons, #AI, #agency

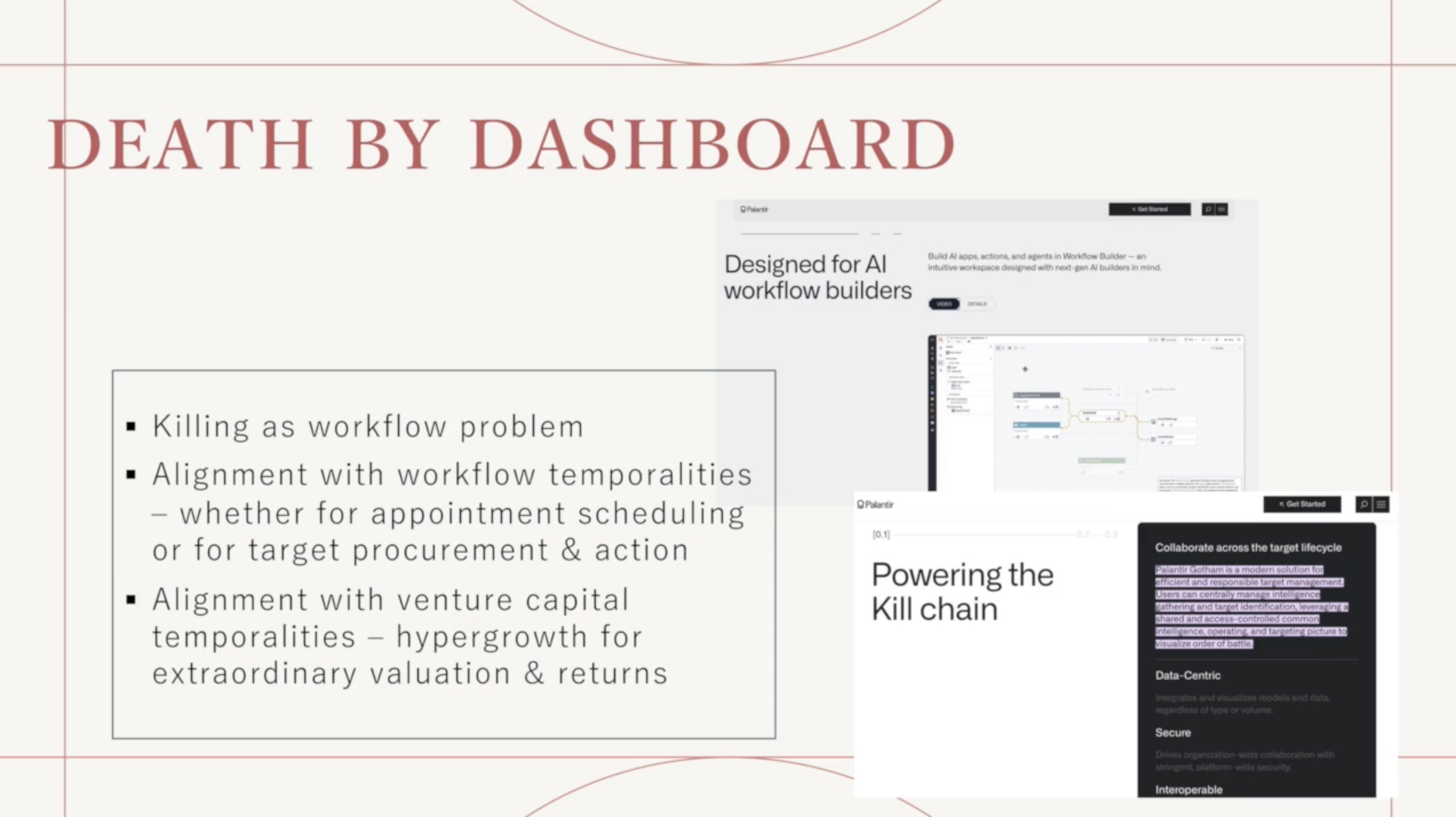

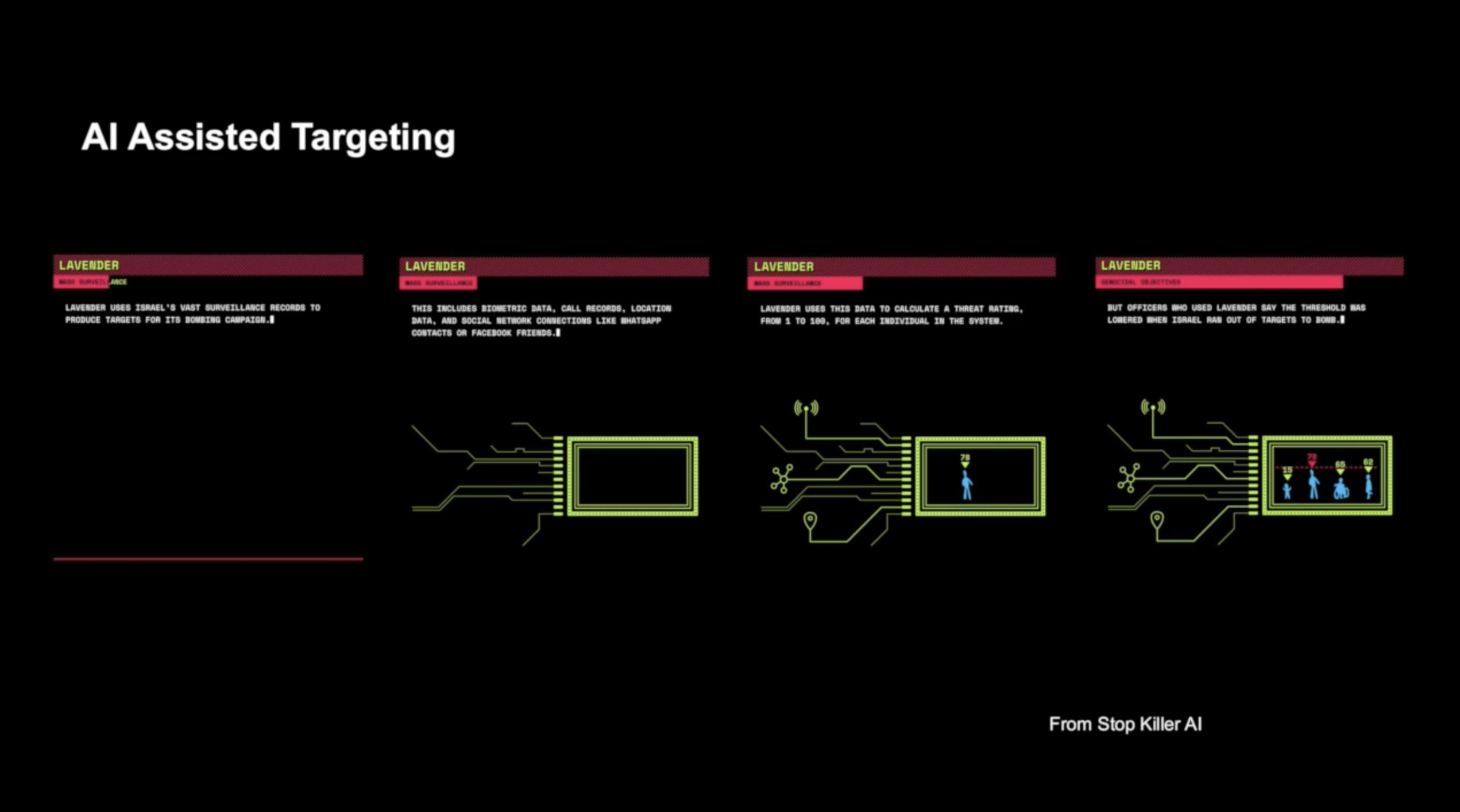

Already today, there are tragic indications that militaries are using AI decision support systems to accelerate the production of targets. AI systems such as Israel’s ‘Lavender’, for example, assist in discovering targets based on a set of data parameters which have been selected to define a suspected Hamas operative (Abraham 2024). These parameters can be very narrow (a specific, named individual, or a very clearly defined vehicle) or very broad (a person with a particular movement pattern, network or mobile phone activity). The identified, or discovered, targets are then suggested to a team of operators who are tasked with vetting the viability of these targets within a matter of minutes, if not seconds, and then actioning the target nominations accordingly. In the case of ‘Lavender’, operators would “devote only about ’20 seconds’ to each target before authorizing a bombing – just to make sure the target was male” (ibid.), turning the targeting process into a crude, accelerated, quasi-autonomous workflow for the act of killing. In such an environment, the human is marginalised as a moral agent; they become enmeshed within the techno-logical ecology as a functional object in an infrastructure and optimisation process for assessing a statistically calculated target production line. In other words, in systems where AI plays a significant role in nominating what, or who, is marked for lethal action, and where the mandate is to take that action at an accelerated pace, the kill chain becomes akin to a workflow management system. It is driven by a supply chain management logic which allows action sequences to become fully automated processes, autonomous in all but name: death by dashboard. This is a necessarily systems-oriented approach, which bears some resonance with the notorious practice of ‘systematic killing’ and the history of systematic violence.#automation, #data war, #targeting, #agency

The argument Neil Renic and I put forward is that a systematised mode of killing facilitates a moral devaluation of humans in two ways. The first is the rendering of those targeted as data objects, often depicted only crudely on the computational interface as red or orange squares – this is the digitised de-humanisation that many anti-autonomous weapons campaigners highlight as implicit in autonomous weapons (Aboeid 2023). The second mode of human devaluation resides in the erosion of moral agency not only of those targeted but also of those involved in administering the systematic violence, whose ability to understand and exercise moral restraint in the use of force is undermined by the systems logic. This double moral bind risks escalating the unwarranted, unjust or indeed brutal application of violence.#de-humanisation, #violence

Systematic killing – as a signifier – is associated with some of the darkest historical periods of mass violence and widespread death and destruction. It is a term laden with moral abhorrence, and to raise it within the contemporary discussion of lethal autonomous weapon systems and AI targeting may well seem an overreach. However, just as Anders raises the term ‘the monstrous’, we think it prudent to examine the foundations of systematic killing in order to better understand the logic that underpins this mode of violence, and its effects, inside and outside the battlefield.#violence, #AI, #targeting, #Günther Anders

Systematisation incentivises, or imposes, classification and categorisation so that individuals fit within a pre-established typology of what constitutes an enemy, a hostile, a suspect – in short, a possible target. The targets – or, more broadly, those on the receiving end of violence – are, then, always de-individualised and treated as analysable objects; their data is collected, charted, disaggregated and reaggregated to fit within the wider brackets of the characteristics that make up the category ‘target’, or ‘enemy’. Hannah Arendt charts this desire to know who fits within a broadly established category of “objective enemy” in the context of totalitarianism, where the Soviet secret police devised an extensive filing system through the harvesting and categorisation of data about suspects. This filing system depicted the suspect (marked by a red circle) on a large card. Also depicted were the suspect’s known political affiliates (also marked red), their wider non-political associates (marked by green circles), friends of friends of the suspect (brown circles), and so on. These were then connected by lines to establish cross-relationships between all the circles, extending the connections and assumed associations potentially infinitely (Arendt 2004, 558-559). The logical extension of this aspiration is to have data on everything and everyone, turning entire populations into a network of possible suspects.#targeting, #Hannah Arendt, #de-humanisation

Those aspects that constitute the human as a subject, with their individual experiences and attributes, are eroded in this process. The differences that always exist in a plurality of human subjects, and their relations, are fitted to the available categories, including those differences that might inform any moral judgement as to whether a targeting decision is indeed just or unjust. The process of classification and categorisation always also entails de-subjectification and objectification. If we look at history’s more egregious instances of mass violence, it seems that increased systematisation and objectification almost always opened the door to more violence. The more systematic the structures that facilitated the killing, the more the targets were classified not according to their humanity but according to some pre-specified set of parameters – associations, movement patterns, demographic data – cast in terms of danger, risk or enmity, and, in turn, the greater the possibility for dispassionately applied violence.#classification, #violence, #targeting

The desire for data surveillance and categorisation as actionable knowledge to eliminate all possible risk – real or perceived – has always been, as Arendt notes, the “utopian goal of the totalitarian secret police”. Its dream is that “one look at the gigantic map on the office wall should suffice at any moment to establish who is related to whom and in what degree of intimacy; and, theoretically, this dream is not unrealizable, although its technical execution is bound to be somewhat difficult. If this map really did exist, not even memory would stand in the way of the totalitarian claim to domination; such a map might make it possible to obliterate people without any traces, as if they had never existed at all” (Arendt 2004, 560).#Hannah Arendt, #totalitarism, #surveillance

This aspiration becomes technically realisable with the technologies under discussion in this essay. It is a vision which implicitly categorises humans as objects of suspicion, and a terrifying one that seems all too plausible in the present moment: “real-time actionable intelligence” at speed and scale is the aspiration for AI decision support systems today (see, for example, Shultz/Clarke 2020). While technological limitations in earlier totalitarian contexts restricted the scope for this objectification, the technological substrate available through algorithmic tools fosters it.#Intelligence, #decision, #targeting

When we consider how AI-enabled targeting systems function – whether they constitute an element of a fully autonomous system or act as a decision support system – it is useful to keep their intrinsic computational operating principles in mind. Artificial Intelligence is first and foremost an instrument for identifying and analysing patterns. Its data processing logic works precisely on the basis of classification and categorisation. A computer grasps everything within our human world as objects – plants, cars, chairs, cats, women, men, children, tanks – which exist only as datapoints. An AI targeting system quite literally must objectify the target, as it computes an incoming set of data against patterns learned from its training data in order to find relevant shapes and features. To the machine, a human is a set of features, lines, pixels and parameters that constitute a model of a human as object. When an AI system identifies a human as a target-object, that human is immediately objectified. Such systems render the world as a set of objects and related patterns from which outcomes can be predicted and calculated. A target comes to be known through statistical probability, wherein “seemingly discrete, unconnected phenomena are conjoined and correlatively evaluated” (Cheney-Lippold 2019, 523). Within this process, data – behavioural, contextual, visual, demographic and so on – is collected, disaggregated and reaggregated to conform to specific modes of classification. Drawing on this data, the system produces systematic inferences as to who or what falls within a pattern of normalcy (benign) or abnormality (potential threat), always with a view to eliminating a risk or threat.#data war, #AI, #human-as-object, #de-humanisation

As John Cheney-Lippold explains, “to be intelligible to a statistical model is […] to be transcoded into a framework of objectification” (ibid. 524). In this process, any individual becomes defined and cross-calculated as a computationally ascertained, actionable object. The human target-object is reworked as a “discrete, modular and thus incomplete” entity, which fits smoothly within a technological functionality but always opens a space for friction and error as it butts against the plural reality of social life and experience. The statistical process produces a human-as-object “who cannot rely on anything unique to them, because the solidity of their subjectivity is determined wholly outside of one’s self, and according to whatever gets included within a reference class or dataset they cannot decide” (ibid.). It is a comprehensive denial of agency to individuals, or groups of individuals, caught in the cross-hairs of algorithmic war.#statistical model, #human-as-object, #agency

In war and conflict, objectification’s most likely companion is de-humanisation, and the relationship between de-humanisation and violence is well studied and documented. Indeed, as David Livingstone Smith details in his 2020 book *On Inhumanity*, de-humanisation is a key feature of almost all mass atrocities. Livingstone Smith’s account of the relationship between de-humanisation and violence is powerful and comprehensive in its examination of the psychological, social and political aspects. De-humanisation is, for Livingstone Smith, not so much the violent deed itself (although the deed is its manifestation), but rather a “kind of attitude – a way of thinking about others” (Livingstone Smith 2020, 17). In other words, it is a ‘mode of thought’ about others, a mindset, that is installed ‘before’ the violent act occurs: a way of thinking about others as not-quite-humans (as some-thing), which needs to take hold first in order for violent actions to be justified. This self-justification mechanism is crucial as a means of overcoming “the chinks in our psychological armour” (ibid.) against the infliction of mass violence, which would otherwise safeguard against seeing other humans as having less value, as being sub-human.#de-humanisation

As humans, we tend to have a psychological barrier against killing other humans (some exceptions notwithstanding), and particularly against mass violence. To facilitate acts of mass violence against other humans, certain conditions and mechanisms need to be in place that override these defences. Psychoanalysis suggests that for humans to engage in acts that do not fully accord with their own moral standards, a separation between cognition and affect must take place. In other words, rational thought and emotional states are isolated from one another, with the latter being kept in check by the former. Such a schism is the foundation for the emergence of Anders’ active and passive Eichmen. Analysts of violence in World War I and World War II recognised this isolation of cognition from emotion as a psychopathology of modernity, one that has played some part in facilitating the erosion of restraint (Nandy 1997). And here is where the AI targeting system comes most effectively into play, in crystallising the second strand of eroding moral restraint against killing: the objectification of the dispenser of violence, who, embedded in computational structures, becomes a functional element within a wider technological ecology – an element of a machine, to echo Wiener’s words. This technification of the human is an additional form of objectification, one that erodes moral agency. The same process-logic that degrades the moral status of those caught in the cross-hairs of the targeting process, then, also de-humanises the perpetrator.#violence, #automation, #agency

In our article, ‘Crimes of Dispassion’, we draw on the work of the sociologist and psychologist Herbert C. Kelman, who analysed the phenomenon of mass violence in the 1970s, drawing out the structural and psychological foundations necessary for the erosion of moral restraint toward mass violence (Kelman 1970). In his discussion, Kelman makes it clear that the foundations for mass violence are often historically rooted, situationally conditioned and frequently grounded in racialised and ideological enmity. These foundations are important. However, Kelman also identifies three structural elements that enable the lowering of restraint against inflicting mass violence, thereby paving the way for the monstrous.#violence

The first of these elements is ‘authorisation’. Authorisation is in place when a person is embedded in a structure within which they become “involved in an action without considering the consequences, … and without really making a decision” (ibid. 38). In other words, these are configurations in which the human abdicates decision responsibility to another (higher) authority. This is amplified in environments that enable systematic deferral to other authorities – ever higher up, shifting the locus of moral responsibility ever further away from the violent act. Through structured authorisation, control is surrendered to authoritative agents which are assumed to be bound to larger, often abstract goals that “transcend the rules of standard morality” (ibid. 44). For those tasked with actioning the violent acts, agency becomes diffused and distributed elsewhere, and a space for rational deniability of morally egregious acts is opened.#authorisation, #decision, #prediction, #violence

The second process Kelman highlights on the path toward an erosion of moral restraint against mass violence is ‘routinisation’. Where authorisation overrides otherwise existing moral concerns, processes of routinisation limit the procedural points at which such moral concerns can, and will, emerge. It reflects a configuration in which humans are embedded in a distributed system of routines and repetition, which delimits the space for anything that falls outside the system’s logic. Routinisation is effective in two ways: first, a structural environment of routine tasks reduces the necessity for decision-making, thereby minimising the occasions on which moral questions might arise; and second, such a process-focused environment makes it easier to avoid seeing or understanding the implications of the action, since those tasked with action are focused on details rather than on the broader meaning of the task at hand. Here, Kelman echoes Anders, who expressed the point in his letter to Klaus Eichmann as follows: “When we are employed to carry out one of the countless individual tasks that make up the entirety of the production process, we not only lose interest in the mechanism as a whole and in its final effects, but we are also deprived of the ability to form a picture of it” (Anders 1988, 25). This moral diffusion in routinised processes has the potential to normalise otherwise morally repugnant acts, and the nodes at which moral objections could be raised are minimised.#process, #violence, #routinisation

The third dimension Kelman raises is one we are already familiar with: ‘de-humanisation’. For Kelman, de-humanisation cuts in both directions. On the one hand, “to the extent that victims are dehumanised, principles of morality no longer apply to them and moral restraints against killing are more readily overcome” (Kelman 1970, 48). On the other, it also affects the perpetrator of the violence, who is enrolled into a system of routinised processes and authorisation in the production of death, in which their own humanity is always already mediated and curtailed in significant ways.#dehumanisation, #violence

The conditions of authorisation, routinisation and de-humanisation are latent within the AI targeting environment. The technology acts as authority, the environment abounds with distributed, routinised tasks as part of the wider targeting process, and, as detailed above, de-humanisation through objectification is always implicit in such AI targeting contexts. Rather than facilitating a more discriminate or ‘humane’ use of lethal force, as some autonomous weapons advocates are tempted to suggest, the AI targeting configuration has the potential to expand violence, perhaps even to foster mass violence. The reports reaching us from Gaza, in which AI targeting systems seem to have played a crucial role in accelerating and expanding the application of violence, may well confirm what Anders, Kelman and others indicated in their prescient analyses.#targeting, #autonomous weapons

Certain technological logics facilitate certain perspectives and actions. Autonomous or semi-autonomous lethal systems prioritise killing as a process, and in today’s military-technology-industry landscape this fact is scarcely hidden in the discourse. ‘Lethality’ is the aim for technologically mediated violence at speed and scale; this much is out in the open. Producing large volumes of targets is not simply a workflow issue; it is the very essence of the workflow approach. The mandate is to hit more targets faster; the aim is to not run out of targets; and, in the context of the Russia–Ukraine war, taking out more targets reportedly means more funding for more semi-autonomous drones. It is a production-process ethos that can effortlessly be transferred from manufacturing auto parts to producing dead bodies with greater speed and efficiency. The technological data processing substrate is, quite literally, the same.#autonomous weapons, #automation

No peaceful future can be built on the systematic production and elimination of targets as a primary tactic of warfare. If this ethos is not challenged more widely, we risk becoming the direct inheritors of Eichmann’s legacy. Indeed, if this ethos is instead celebrated and more AI targeting finds its way into more conflicts – as seems to be the case in our darkening times – then, as Anders warned us, it is not only possible that “the monstrous” may be repeated. It may already be on the near horizon.#automation, #violence